Cisco CCNA1 Chapter 9: Selected Excerpts

Ethernet operates across two layers of the OSI model. The model provides a reference to which Ethernet can be related but it is actually implemented in the lower half of the Data Link layer, which is known as the Media Access Control (MAC) sublayer, and the Physical layer only.

Ethernet at Layer 1 involves signals, bit streams that travel on the media, physical components that put signals on media, and various topologies. Ethernet Layer 1 performs a key role in the communication that takes place between devices, but each of its functions has limitations.

As the figure shows, Ethernet at Layer 2 addresses these limitations. The Data Link sublayers contribute significantly to technological compatibility and computer communications. The MAC sublayer is concerned with the physical components that will be used to communicate the information and prepares the data for transmission over the media.

The Logical Link Control (LLC) sublayer remains relatively independent of the physical equipment that will be used for the communication process.

Media Access Control (MAC) is the lower Ethernet sublayer of the Data Link layer. Media Access Control is implemented by hardware, typically in the computer Network Interface Card (NIC).

The Ethernet MAC sublayer has two primary responsibilities:

- Data Encapsulation

- Media Access Control

Data Encapsulation

Data encapsulation provides three primary functions:

- Frame delimiting

- Addressing

- Error detection

Media Access Control

The MAC sublayer controls the placement of frames on the media and the removal of frames from the media. As its name implies, it manages the media access control. This includes the initiation of frame transmission and recovery from transmission failure due to collisions.

Logical Topology

The underlying logical topology of Ethernet is a multi-access bus. This means that all the nodes (devices) in that network segment share the medium. This further means that all the nodes in that segment receive all the frames transmitted by any node on that segment.

Ethernet provides a method for determining how the nodes share access to the media. The media access control method for classic Ethernet is Carrier Sense Multiple Access with Collision Detection (CSMA/CD).

The success of Ethernet is due to the following factors:

- Simplicity and ease of maintenance

- Ability to incorporate new technologies

- Reliability

- Low cost of installation and upgrade

Ethernet Frame Size

Both the Ethernet II and IEEE 802.3 standards define the minimum frame size as 64 bytes and the maximum as 1518 bytes. This includes all bytes from the Destination MAC Address field through the Frame Check Sequence (FCS) field. The Preamble and Start Frame Delimiter fields are not included when describing the size of a frame. The IEEE 802.3ac standard, released in 1998, extended the maximum allowable frame size to 1522 bytes. The frame size was increased to accommodate a technology called Virtual Local Area Network (VLAN).
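The size limits above can be expressed as a simple check. This is a minimal Python sketch, not part of the course material: the function name `classify_frame_size` and its return labels are illustrative assumptions, and the length is assumed to be counted from the Destination MAC Address through the FCS, without the Preamble or Start Frame Delimiter.

```python
MIN_FRAME = 64          # bytes, Destination MAC Address through FCS
MAX_FRAME = 1518        # bytes, frame without a VLAN tag
MAX_FRAME_VLAN = 1522   # bytes, IEEE 802.3ac limit for VLAN-tagged frames


def classify_frame_size(length: int, vlan_tagged: bool = False) -> str:
    """Return 'too short', 'ok', or 'too long' for a frame length in bytes."""
    limit = MAX_FRAME_VLAN if vlan_tagged else MAX_FRAME
    if length < MIN_FRAME:
        return "too short"
    if length > limit:
        return "too long"
    return "ok"
```

For example, `classify_frame_size(1522)` reports an untagged frame as too long, while `classify_frame_size(1522, vlan_tagged=True)` accepts it.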

Frame Check Sequence Field

The Frame Check Sequence (FCS) field (4 bytes) is used to detect errors in a frame. It uses a cyclic redundancy check (CRC). The sending device includes the results of a CRC in the FCS field of the frame.

The receiving device receives the frame and generates a CRC to look for errors. If the calculations match, no error occurred. Calculations that do not match are an indication that the data has changed; therefore, the frame is dropped. A change in the data could be the result of a disruption of the electrical signals that represent the bits.
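The send-and-verify procedure can be sketched in Python. Ethernet's CRC uses the same CRC-32 polynomial as `zlib.crc32`, so the standard library is enough for an illustration. The helper names `append_fcs` and `fcs_is_valid` are hypothetical, and storing the four FCS bytes least-significant byte first is an assumption of this sketch, not something the text above specifies.

```python
import struct
import zlib


def append_fcs(frame_without_fcs: bytes) -> bytes:
    """Sender side: compute the CRC-32 and append it as a 4-byte FCS."""
    crc = zlib.crc32(frame_without_fcs) & 0xFFFFFFFF
    # Assumption: the FCS is stored least-significant byte first.
    return frame_without_fcs + struct.pack("<I", crc)


def fcs_is_valid(frame: bytes) -> bool:
    """Receiver side: recompute the CRC and compare it with the received FCS."""
    body, received_fcs = frame[:-4], frame[-4:]
    calculated_fcs = struct.pack("<I", zlib.crc32(body) & 0xFFFFFFFF)
    return calculated_fcs == received_fcs
```

A frame built with `append_fcs` passes `fcs_is_valid`; flipping any single bit afterwards makes the check fail, which is the condition under which the receiver drops the frame.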

Data Link Layer

OSI Data Link layer (Layer 2) physical addressing, implemented as an Ethernet MAC address, is used to transport the frame across the local media. Although providing unique host addresses, physical addresses are non-hierarchical. They are associated with a particular device regardless of its location or to which network it is connected.

These Layer 2 addresses have no meaning outside the local network media. A packet may have to traverse a number of different Data Link technologies in local and wide area networks before it reaches its destination. A source device therefore has no knowledge of the technology used in intermediate and destination networks or of their Layer 2 addressing and frame structures.

Network Layer

Network layer (Layer 3) addresses, such as IPv4 addresses, provide the ubiquitous, logical addressing that is understood at both source and destination. To arrive at its eventual destination, a packet carries the destination Layer 3 address from its source. However, as it is framed by the different Data Link layer protocols along the way, the Layer 2 address it receives each time applies only to that local portion of the journey and its media.

In short:

The Network layer address enables the packet to be forwarded toward its destination.

The Data Link layer address enables the packet to be carried by the local media across each segment.
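The two statements above can be illustrated with a small Python sketch. All class names, function names, and addresses are hypothetical; the only point is that the same packet (Layer 3 addresses unchanged) is wrapped in a new frame with different Layer 2 addresses on every segment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src_ip: str
    dst_ip: str


@dataclass(frozen=True)
class Frame:
    src_mac: str
    dst_mac: str
    packet: Packet


def frames_along_path(packet, segments):
    """Wrap the same packet in a new frame for each (src_mac, dst_mac) segment."""
    return [Frame(src, dst, packet) for src, dst in segments]


# Hypothetical path: host A -> router -> host B
packet = Packet("192.168.1.10", "10.0.0.20")
frames = frames_along_path(packet, [
    ("02:00:00:00:00:0a", "02:00:00:00:00:01"),  # host A to router, first LAN
    ("02:00:00:00:00:02", "02:00:00:00:00:0b"),  # router to host B, second LAN
])
```

Every element of `frames` carries the identical `packet`, while the MAC address pair differs per segment.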

In Ethernet, different MAC addresses are used for Layer 2 unicast, multicast, and broadcast communications.
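A short Python sketch shows how the three kinds of MAC address can be told apart. The rule used here is not stated in these excerpts and comes from general Ethernet knowledge: the broadcast address is all ones (FF:FF:FF:FF:FF:FF), and any other address with the least significant bit of the first octet set is a group (multicast) address. The function name `mac_address_type` is hypothetical.

```python
def mac_address_type(mac: str) -> str:
    """Classify a MAC address string as 'unicast', 'multicast', or 'broadcast'."""
    octets = bytes(int(part, 16) for part in mac.replace("-", ":").split(":"))
    if len(octets) != 6:
        raise ValueError("a MAC address has exactly 6 octets")
    if octets == b"\xff" * 6:
        return "broadcast"
    if octets[0] & 0x01:  # group bit set in the first octet
        return "multicast"
    return "unicast"
```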

A unicast MAC address is the unique address used when a frame is sent from a single transmitting device to a single destination device.

Broadcast

With a broadcast, the packet contains a destination IP address that has all ones (1s) in the host portion. This numbering in the address means that all hosts on that local network (broadcast domain) will receive and process the packet. Many network protocols, such as Dynamic Host Configuration Protocol (DHCP) and Address Resolution Protocol (ARP), use broadcasts.

Multicast

Recall that multicast addresses allow a source device to send a packet to a group of devices. Devices that belong to a multicast group are assigned a multicast group IP address. The range of multicast addresses is from 224.0.0.0 to 239.255.255.255. Because multicast addresses represent a group of addresses (sometimes called a host group), they can only be used as the destination of a packet. The source will always have a unicast address.
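The broadcast and multicast address rules above can be checked with Python's standard `ipaddress` module. This is only a sketch; the wrapper names `broadcast_address` and `is_multicast` and the example network are assumptions chosen for illustration.

```python
import ipaddress


def broadcast_address(network: str) -> str:
    """Address with all ones in the host portion of the given IPv4 network."""
    return str(ipaddress.ip_network(network).broadcast_address)


def is_multicast(address: str) -> bool:
    """True for IPv4 addresses in 224.0.0.0 through 239.255.255.255."""
    return ipaddress.ip_address(address).is_multicast
```

For the hypothetical network `192.168.1.0/24`, `broadcast_address` returns `192.168.1.255`, and `is_multicast("224.0.0.1")` is true.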

In a shared media environment, all devices have guaranteed access to the medium, but they have no prioritized claim on it. If more than one device transmits simultaneously, the physical signals collide and the network must recover in order for communication to continue.

Collisions are the cost that Ethernet pays to get the low overhead associated with each transmission.

Ethernet uses Carrier Sense Multiple Access with Collision Detection (CSMA/CD) to detect and handle collisions and manage the resumption of communications.

Because all computers using Ethernet send their messages on the same media, a distributed coordination scheme (CSMA) is used to detect the electrical activity on the cable. A device can then determine when it can transmit. When a device detects that no other computer is sending a frame, or carrier signal, the device will transmit, if it has something to send.

Carrier Sense

In the CSMA/CD access method, all network devices that have messages to send must listen before transmitting.

If a device detects a signal from another device, it will wait for a specified amount of time before attempting to transmit.

When there is no traffic detected, a device will transmit its message. While this transmission is occurring, the device continues to listen for traffic or collisions on the LAN. After the message is sent, the device returns to its default listening mode.

Multi-access

If the distance between devices is such that the latency of one device's signals means that signals are not detected by a second device, the second device may start to transmit, too. The media now has two devices transmitting their signals at the same time. Their messages will propagate across the media until they encounter each other. At that point, the signals mix and the message is destroyed. Although the messages are corrupted, the jumble of remaining signals continues to propagate across the media.

Collision Detection

When a device is in listening mode, it can detect when a collision occurs on the shared media. The detection of a collision is made possible because all devices can detect an increase in the amplitude of the signal above the normal level.

Once a collision occurs, the other devices in listening mode - as well as all the transmitting devices - will detect the increase in the signal amplitude. Once detected, every device transmitting will continue to transmit to ensure that all devices on the network detect the collision.

Jam Signal and Random Backoff

Once the collision is detected by the transmitting devices, they send out a jamming signal. This jamming signal is used to notify the other devices of a collision, so that they will invoke a backoff algorithm. This backoff algorithm causes all devices to stop transmitting for a random amount of time, which allows the collision signals to subside.

After the delay has expired on a device, the device goes back into the "listening before transmit" mode. A random backoff period ensures that the devices that were involved in the collision do not try to send their traffic again at the same time, which would cause the whole process to repeat. However, this also means that a third device may transmit before either of the two involved in the original collision has a chance to retransmit.

Latency

As discussed, each device that wants to transmit must first "listen" to the media to check for traffic. If no traffic exists, the station will begin to transmit immediately. The electrical signal that is transmitted takes a certain amount of time (latency) to propagate (travel) down the cable. Each hub or repeater in the signal's path adds latency as it forwards the bits from one port to the next.

This accumulated delay increases the likelihood that collisions will occur because a listening node may transition into transmitting signals while the hub or repeater is processing the message. Because the signal had not reached this node while it was listening, it thought that the media was available. This condition often results in collisions.

Timing and Synchronization

In half-duplex mode, if a collision has not occurred, the sending device will transmit 64 bits of timing synchronization information, which consists of the Preamble and the Start Frame Delimiter (SFD).

The sending device will then transmit the complete frame.

Ethernet implementations with throughput of 10 Mbps and slower are asynchronous. Asynchronous communication in this context means that each receiving device will use the 8 bytes of timing information to synchronize the receive circuit to the incoming data and then discard the 8 bytes.

Ethernet implementations with throughput of 100 Mbps and higher are synchronous. Synchronous communication in this context means that the timing information is not required. However, for compatibility reasons, the Preamble and Start Frame Delimiter (SFD) fields are still present.

Bit Time

For each different media speed, a period of time is required for a bit to be placed and sensed on the media. This period of time is referred to as the bit time. On 10-Mbps Ethernet, one bit at the MAC layer requires 100 nanoseconds (ns) to transmit. At 100 Mbps, that same bit requires 10 ns to transmit. And at 1000 Mbps, it only takes 1 ns to transmit a bit. As a rough estimate, 20.3 centimeters (8 inches) per nanosecond is often used for calculating the propagation delay on a UTP cable. The result is that for 100 meters of UTP cable, it takes just under 5 bit times for a 10BASE-T signal to travel the length of the cable.
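The arithmetic in this paragraph can be reproduced with a few lines of Python. The function and constant names are hypothetical; the 20.3 cm per nanosecond figure is the rough estimate quoted above, not a measured value.

```python
PROPAGATION_CM_PER_NS = 20.3  # rough UTP estimate quoted in the text


def bit_time_ns(speed_mbps: float) -> float:
    """Time needed to place one bit on the media, in nanoseconds."""
    return 1000.0 / speed_mbps


def propagation_delay_ns(cable_m: float) -> float:
    """Approximate time for a signal to travel the given cable length."""
    return cable_m * 100.0 / PROPAGATION_CM_PER_NS


def delay_in_bit_times(cable_m: float, speed_mbps: float) -> float:
    """Propagation delay expressed in bit times at the given speed."""
    return propagation_delay_ns(cable_m) / bit_time_ns(speed_mbps)
```

With these definitions, `delay_in_bit_times(100, 10)` comes out at roughly 4.9, matching the "just under 5 bit times" stated for 100 meters of cable at 10 Mbps.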

For CSMA/CD Ethernet to operate, the sending device must become aware of a collision before it has completed transmission of a minimum-sized frame. At 100 Mbps, the device timing is barely able to accommodate 100 meter cables. At 1000 Mbps, special adjustments are required because nearly an entire minimum-sized frame would be transmitted before the first bit reached the end of the first 100 meters of UTP cable. For this reason, half-duplex mode is not permitted in 10-Gigabit Ethernet.

These timing considerations have to be applied to the interframe spacing and backoff times (both of which are discussed in the next section) to ensure that when a device transmits its next frame, the risk of a collision is minimized.

Slot Time

In half-duplex Ethernet, where data can only travel in one direction at once, slot time becomes an important parameter in determining how many devices can share a network. For all speeds of Ethernet transmission at or below 1000 Mbps, the standard describes how an individual transmission may be no smaller than the slot time.

Determining slot time is a trade-off between the need to reduce the impact of collision recovery (backoff and retransmission times) and the need for network distances to be large enough to accommodate reasonable network sizes. The compromise was to choose a maximum network diameter (about 2500 meters) and then to set the minimum frame length long enough to ensure detection of all worst-case collisions.

Slot time for 10- and 100-Mbps Ethernet is 512 bit times, or 64 octets.

Slot time for 1000-Mbps Ethernet is 4096 bit times, or 512 octets.

The slot time ensures that if a collision is going to occur, it will be detected within the first 512 bits (4096 for Gigabit Ethernet) of the frame transmission. This simplifies the handling of frame retransmissions following a collision.
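Converting the slot times above into octets and microseconds is a one-line calculation per speed. The sketch below uses hypothetical names; it relies only on the bit-time counts given in the text and on the fact that bits divided by megabits per second yields microseconds.

```python
SLOT_BIT_TIMES = {10: 512, 100: 512, 1000: 4096}  # speed in Mbps -> bit times


def slot_time_octets(speed_mbps: int) -> int:
    """Slot time expressed as a number of octets."""
    return SLOT_BIT_TIMES[speed_mbps] // 8


def slot_time_us(speed_mbps: int) -> float:
    """Slot time in microseconds (bits / Mbps = microseconds)."""
    return SLOT_BIT_TIMES[speed_mbps] / speed_mbps
```

This gives 51.2 microseconds at 10 Mbps, 5.12 microseconds at 100 Mbps, and 4.096 microseconds at 1000 Mbps.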

Slot time is an important parameter for the following reasons:

The 512-bit slot time establishes the minimum size of an Ethernet frame as 64 bytes. Any frame less than 64 bytes in length is considered a "collision fragment" or "runt frame" and is automatically discarded by receiving stations.

The slot time establishes a limit on the maximum size of a network's segments. If the network grows too big, late collisions can occur. Late collisions are considered a failure in the network because the collision is detected too late by a device during the frame transmission to be automatically handled by CSMA/CD.

Slot time is calculated assuming maximum cable lengths on the largest legal network architecture. All hardware propagation delay times are at the legal maximum and the 32-bit jam signal is used when collisions are detected.

The actual calculated slot time is just longer than the theoretical amount of time required to travel between the furthest points of the collision domain, collide with another transmission at the last possible instant, and then have the collision fragments return to the sending station and be detected.

For the system to work properly, the first device must learn about the collision before it finishes sending the smallest legal frame size.

To allow 1000 Mbps Ethernet to operate in half-duplex mode, the extension field was added to the frame when sending small frames purely to keep the transmitter busy long enough for a collision fragment to return. This field is present only on 1000-Mbps, half-duplex links and allows minimum-sized frames to be long enough to meet slot time requirements. Extension bits are discarded by the receiving device.
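As a rough illustration, the amount of extension needed for a given frame can be computed as follows. The function name is hypothetical, and the sketch assumes the extension simply pads the transmission up to the 512-octet Gigabit slot time.

```python
GIGABIT_SLOT_OCTETS = 512  # 4096 bit times


def extension_octets(frame_length: int) -> int:
    """Extension octets appended on a 1000-Mbps half-duplex link (assumed padding rule)."""
    return max(0, GIGABIT_SLOT_OCTETS - frame_length)
```

Under this assumption a minimum-sized 64-byte frame needs 448 octets of extension, while any frame of 512 bytes or more needs none.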

Interframe Spacing

The Ethernet standards require a minimum spacing between two non-colliding frames. This gives the media time to stabilize after the transmission of the previous frame and time for the devices to process the frame. Referred to as the interframe spacing, this time is measured from the last bit of the FCS field of one frame to the first bit of the Preamble of the next frame.

After a frame has been sent, all devices on a 10 Mbps Ethernet network are required to wait a minimum of 96 bit times (9.6 microseconds) before any device can transmit its next frame. On faster versions of Ethernet, the spacing remains the same - 96 bit times - but the interframe spacing time period grows correspondingly shorter.
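The fixed spacing of 96 bit times translates into a shorter wall-clock gap as the speed goes up. A minimal Python sketch, with a hypothetical function name:

```python
IFS_BIT_TIMES = 96  # minimum interframe spacing at every speed


def interframe_spacing_us(speed_mbps: float) -> float:
    """Minimum gap between frames in microseconds (bits / Mbps = microseconds)."""
    return IFS_BIT_TIMES / speed_mbps
```

The result is 9.6 microseconds at 10 Mbps, 0.96 microseconds at 100 Mbps, and 0.096 microseconds at 1000 Mbps.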

Synchronization delays between devices may result in the loss of some frame preamble bits. This in turn may cause a minor reduction of the interframe spacing when hubs and repeaters regenerate the full 64 bits of timing information (the Preamble and SFD) at the start of every frame forwarded. On higher-speed Ethernet, some time-sensitive devices could potentially fail to recognize individual frames, resulting in communication failure.

Jam Signal

As you will recall, Ethernet allows all devices to compete for transmitting time. In the event that two devices transmit simultaneously, CSMA/CD attempts to resolve the issue. But remember, when a larger number of devices are added to the network, it is possible for the collisions to become increasingly difficult to resolve.

As soon as a collision is detected, the sending devices transmit a 32-bit "jam" signal that will enforce the collision. This ensures that all devices in the LAN detect the collision.

It is important that the jam signal not be detected as a valid frame; otherwise the collision would not be identified. The most commonly observed data pattern for a jam signal is simply a repeating 1, 0, 1, 0 pattern, the same as the Preamble.

The corrupted, partially transmitted messages are often referred to as collision fragments or runts. Normal collisions are less than 64 octets in length and therefore fail both the minimum length and the FCS tests, making them easy to identify.

Backoff Timing

After a collision occurs and all devices allow the cable to become idle (each waits the full interframe spacing), the devices whose transmissions collided must wait an additional - and potentially progressively longer - period of time before attempting to retransmit the collided frame. The waiting period is intentionally designed to be random so that two stations do not delay for the same amount of time before retransmitting, which would result in more collisions. This is accomplished in part by expanding the interval from which the random retransmission time is selected on each retransmission attempt. The waiting period is measured in increments of the parameter slot time.

If media congestion leaves the MAC layer unable to send the frame after 16 attempts, it gives up and generates an error to the Network layer. Such an occurrence is rare in a properly operating network and would happen only under extremely heavy network loads or when a physical problem exists on the network.
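The backoff rule described in this section can be sketched in Python. The text only says that the random interval expands on each attempt, is measured in slot times, and that the MAC layer gives up after 16 attempts. Doubling the interval on every collision and capping the exponent at 10 are assumptions of this sketch, based on the usual description of truncated binary exponential backoff. All names are hypothetical.

```python
import random

MAX_ATTEMPTS = 16    # from the text: give up after 16 attempts
BACKOFF_LIMIT = 10   # assumption: the interval stops doubling after 10 collisions


class ExcessiveCollisionsError(RuntimeError):
    """Raised when the frame could not be sent within MAX_ATTEMPTS."""


def backoff_slots(collisions: int, rng=random) -> int:
    """Number of slot times to wait after the given number of collisions."""
    if collisions < 1:
        raise ValueError("collisions must be at least 1")
    if collisions >= MAX_ATTEMPTS:
        raise ExcessiveCollisionsError("frame dropped after 16 attempts")
    exponent = min(collisions, BACKOFF_LIMIT)
    # Pick uniformly from 0 .. 2**exponent - 1; the range doubles each time.
    return rng.randrange(2 ** exponent)
```

Multiplying the returned value by the slot time gives the actual delay. After the first collision a station waits 0 or 1 slot times, after the second 0 to 3, and so on.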

The methods described in this section allowed Ethernet to provide greater service in a shared media topology based on the use of hubs. In the coming switching section, we will see how, with the use of switches, the need for CSMA/CD starts to diminish or, in some cases, is removed altogether.

Nodes are Connected Directly

In a LAN where all nodes are connected directly to the switch, the throughput of the network increases dramatically. The three primary reasons for this increase are:

- Dedicated bandwidth to each port

- Collision-free environment

- Full-duplex operation

Switch Operation

To accomplish their purpose, Ethernet LAN switches use five basic operations:

- Learning

- Aging

- Flooding

- Selective Forwarding

- Filtering
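The five operations listed above can be tied together in a small Python sketch of a MAC address table. This is an illustration under stated assumptions, not a description of any real switch: the class name, the method signature, and the 300-second aging time are all hypothetical choices.

```python
import time


class LearningSwitch:
    def __init__(self, ports, max_age=300.0):
        self.ports = set(ports)
        self.max_age = max_age   # assumed aging time in seconds
        self.table = {}          # MAC address -> (port, time last seen)

    def receive(self, in_port, src_mac, dst_mac, now=None):
        """Process one frame and return the set of ports it is sent out of."""
        now = time.monotonic() if now is None else now
        # Aging: drop entries that have not been refreshed recently.
        self.table = {mac: (port, seen) for mac, (port, seen) in self.table.items()
                      if now - seen <= self.max_age}
        # Learning: remember which port the source address lives on.
        self.table[src_mac] = (in_port, now)
        entry = self.table.get(dst_mac)
        if entry is None:
            # Flooding: unknown destination goes to every port except the source.
            return self.ports - {in_port}
        out_port, _ = entry
        if out_port == in_port:
            # Filtering: destination is on the arrival port, so do not forward.
            return set()
        # Selective forwarding: send only to the port that leads to the destination.
        return {out_port}
```

A frame to an unknown destination is flooded; once the destination has itself sent a frame, later traffic to it goes out of a single port.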

The ARP protocol provides two basic functions:

- Resolving IPv4 addresses to MAC addresses

- Maintaining a cache of mappings

Security

In some cases, the use of ARP can lead to a potential security risk. ARP spoofing, or ARP poisoning, is a technique used by an attacker to inject the wrong MAC address association into a network by issuing fake ARP requests. Because the attacker forges the MAC address of a device, frames can then be sent to the wrong destination.

Manually configuring static ARP associations is one way to prevent ARP spoofing. Authorized MAC addresses can be configured on some network devices to restrict network access to only those devices listed.
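The ARP cache and the static-entry defence can be sketched together in Python. This is a simplified model with hypothetical names; the 120-second lifetime for dynamic entries is an arbitrary assumption, and real operating systems apply their own timers and rules.

```python
import time


class ArpCache:
    def __init__(self, timeout=120.0):
        self.timeout = timeout   # assumed lifetime of a dynamic entry, in seconds
        self._entries = {}       # IPv4 address -> (MAC address, expiry or None)

    def add_static(self, ip, mac):
        """Manually configured mapping that never expires."""
        self._entries[ip] = (mac, None)

    def learn(self, ip, mac, now=None):
        """Record a mapping seen in ARP traffic; static entries are not replaced."""
        now = time.monotonic() if now is None else now
        current = self._entries.get(ip)
        if current is not None and current[1] is None:
            return False         # ignore attempts to override a static entry
        self._entries[ip] = (mac, now + self.timeout)
        return True

    def lookup(self, ip, now=None):
        """Return the cached MAC address, or None if an ARP request is needed."""
        now = time.monotonic() if now is None else now
        entry = self._entries.get(ip)
        if entry is None:
            return None
        mac, expiry = entry
        if expiry is not None and now >= expiry:
            del self._entries[ip]   # dynamic entry has timed out
            return None
        return mac
```

In this model a forged mapping for an address that has a static entry is rejected by `learn`, which is the effect the manual configuration described above is meant to achieve.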

Summary

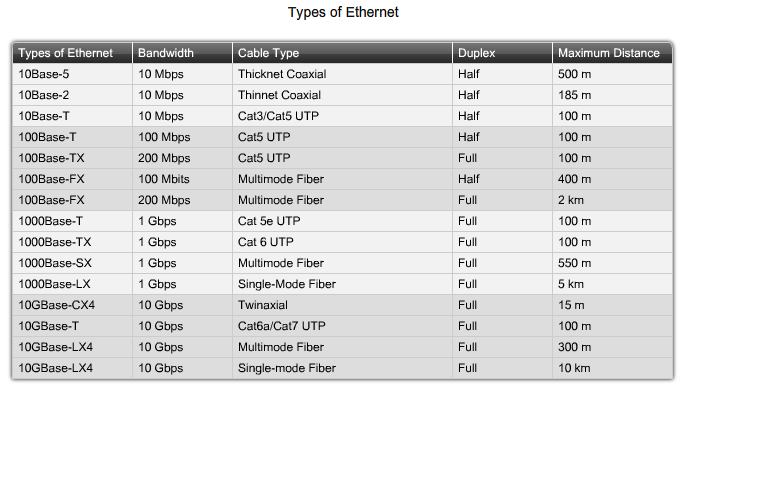

Ethernet is an effective and widely used TCP/IP Network Access protocol. Its common frame structure has been implemented across a range of media technologies, both copper and fiber, making it the most common LAN protocol in use today.

As an implementation of the IEEE 802.2/3 standards, the Ethernet frame provides MAC addressing and error checking. Being a shared media technology, early Ethernet had to apply a CSMA/CD mechanism to manage the use of the media by multiple devices. Replacing hubs with switches in the local network has reduced the probability of frame collisions in half-duplex links. Current and future versions, however, inherently operate as full-duplex communications links and do not need to manage media contention to the same detail.

The Layer 2 addressing provided by Ethernet supports unicast, multicast, and broadcast communications. Ethernet uses the Address Resolution Protocol to determine the MAC addresses of destinations and map them against known Network layer addresses.

Posted in: Cisco

25.10.2009
